Anthropic Claude Mythos...

thehackernews.com

www.tomshardware.com

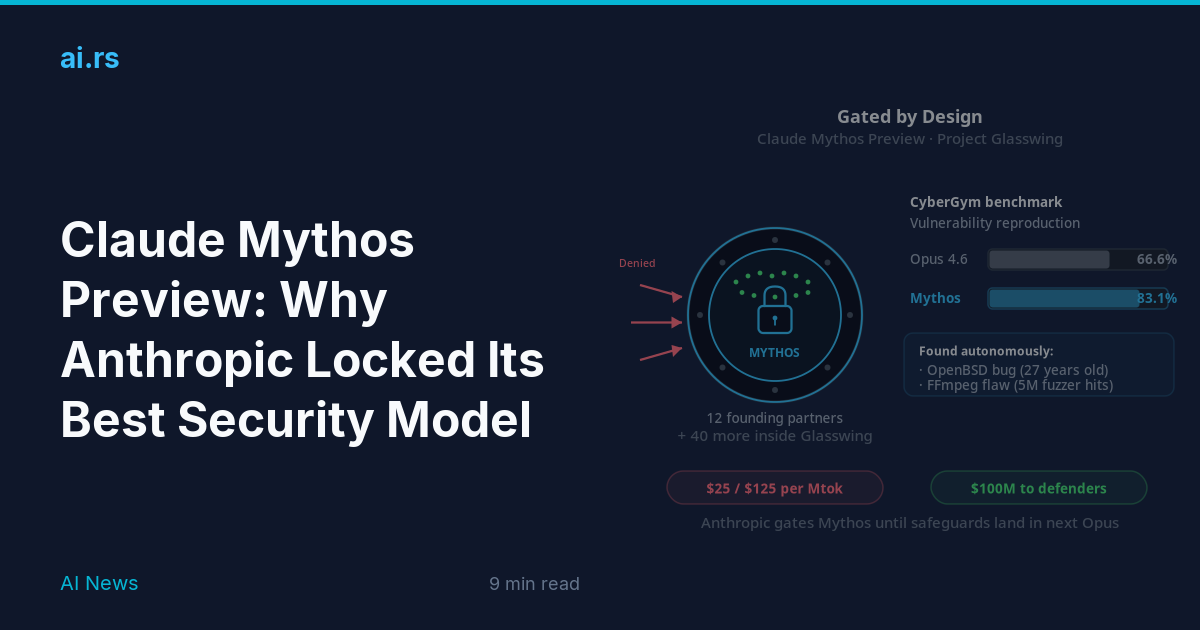

Quote: "Mythos Preview, Anthropic claimed, has already discovered thousands of high-severity zero-day vulnerabilities in every major operating system and web browser. Some of these include a now-patched 27-year-old bug in OpenBSD, a 16-year-old flaw in FFmpeg, and a memory-corrupting vulnerability in a memory-safe virtual machine monitor."

Anthropic's Claude Mythos Finds Thousands of Zero-Day Flaws Across Major Systems

Claude Mythos finds thousands of zero-days as Anthropic launches Project Glasswing, enhancing defenses but exposing AI security risks.

thehackernews.com

Anthropic's latest AI model identifies 'thousands of zero-day vulnerabilities' in 'every major operating system and every major web browser' — Claude Mythos Preview sparks race to fix critical bugs, some unpatched for decades

Anthropic holds back its most advanced model yet to allow companies and institutions to prepare.
